Run Docker In Docker

In this blog, we are going to discuss how to run Docker in Docker using two different methods.

Docker: Docker is a lightweight containerization platform that allows us to set up an environment within seconds from prebuilt images.

When are we going to use Docker in Docker?

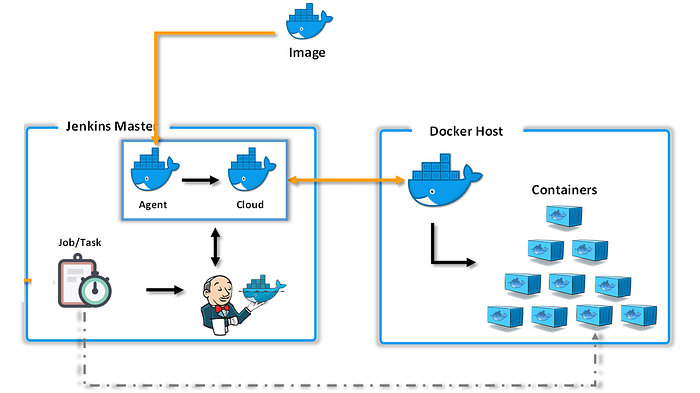

The question of running Docker in a Docker container occurs frequently when using CI tools like Jenkins.

In Jenkins, all the commands in the stages of your pipeline are executed on the agent that you specify. This agent can be a Docker container. So, if one of your commands, for example, in the Build stage, is a Docker command (for example, for building an image), then you have the case that you need to run a Docker command within a Docker container.

Furthermore, Jenkins itself can be run as a Docker container. If you use a Docker agent, you would start this Docker container from within the Jenkins Docker container. If you also have Docker commands in your Jenkins pipeline, then you would have three levels of nested “Dockers”.

However, with the docker.sock approach described below, all these Dockers use one and the same Docker daemon, and all the difficulties of running multiple daemons (in this case three) on the same system are bypassed.

Two methods to run Docker in Docker

- Run docker by mounting docker.sock (DooD Method)

- dind method

Let’s dive into a practical walkthrough

Prerequisite

- Docker installed

- Internet connection to pull images

Docker in Docker Using docker.sock

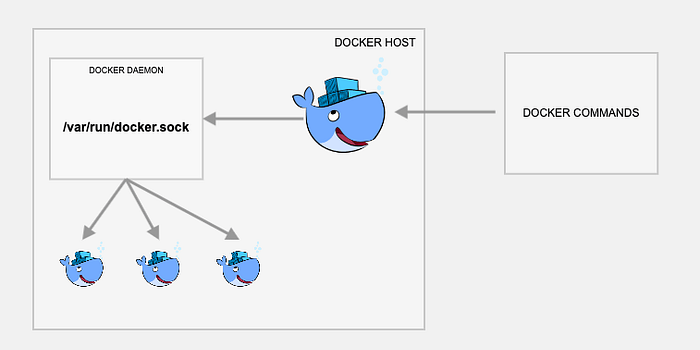

What is docker.sock?

docker.sock is the default UNIX socket of the Docker daemon. UNIX sockets are a means of communication between processes on the same host. By default, the Docker daemon listens on /var/run/docker.sock. If you are on the same host where the daemon runs, you can use /var/run/docker.sock to manage containers.

With this approach, a container, with Docker installed, does not run its own Docker daemon but connects to the Docker daemon of the host system. That means, you will have a Docker CLI in the container, as well as on the host system, but they both connect to one and the same Docker daemon. At any time, there is only one Docker daemon running in your machine, the one running on the host system.
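As a rough illustration of the single-daemon setup described above, the sketch below checks whether the default socket exists and, if it does, asks the daemon for its version through it. It assumes curl (7.40 or newer, for --unix-socket) is installed. The is_socket helper and the DOCKER_SOCK variable are names made up for this example, not part of Docker.

```shell
#!/bin/sh
# Default location of the Docker daemon's UNIX socket.
# DOCKER_SOCK is a variable name chosen for this sketch.
DOCKER_SOCK="${DOCKER_SOCK:-/var/run/docker.sock}"

# is_socket (hypothetical helper): succeeds if the path is a UNIX socket.
is_socket() {
    [ -S "$1" ]
}

if is_socket "$DOCKER_SOCK"; then
    # Query the Engine API's /version endpoint over the socket.
    curl --silent --unix-socket "$DOCKER_SOCK" http://localhost/version \
        || echo "Could not reach the daemon through $DOCKER_SOCK"
else
    echo "No Docker socket found at $DOCKER_SOCK"
fi
```

Run on the host and inside a container that has the socket mounted (provided curl is available there), this should report the same daemon in both places, since both reach it through the same socket.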

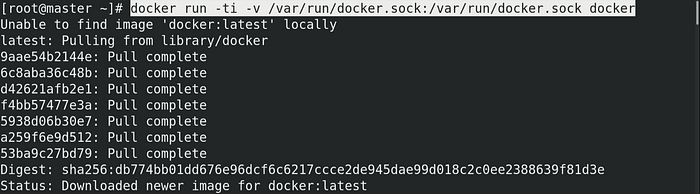

To run Docker with the default UNIX socket docker.sock mounted as a volume, run the command below. It launches a container from the docker image and mounts the host's /var/run/docker.sock at the same path inside the container (-v), so the Docker CLI in the container talks to the daemon running on the host.

>> docker run -ti -v /var/run/docker.sock:/var/run/docker.sock docker

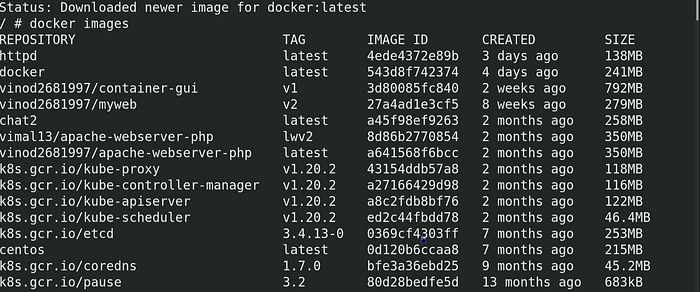

>> docker images

We can observe that the output is exactly the same as when you run this command on the host system.

It looks like the Docker installation of the container that you just started, and that you maybe would expect to be fresh and untouched, already has some images cached and some containers running. This is because we wired up the Docker CLI in the container to talk to the Docker daemon that is already running on the host system.

This means, if you pull an image inside the container, this image will also be visible on the host system (and vice versa). And if you run a container inside the container, this container will actually be a “sibling” to all the containers running on the host machine (including the container in which you are running Docker).
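One way to check that the CLI in the container and the CLI on the host really share a daemon is to compare the daemon IDs they report. The following is a minimal sketch under that assumption. The helper names same_daemon and compare_with_container are invented for illustration, and the container is assumed to be running with the Docker CLI installed.

```shell
#!/bin/sh
# same_daemon (hypothetical helper): compare two daemon IDs and report
# whether both CLIs are talking to the same Docker daemon.
same_daemon() {
    if [ -n "$1" ] && [ "$1" = "$2" ]; then
        echo "same daemon"
    else
        echo "different daemons"
    fi
}

# compare_with_container (hypothetical helper): $1 is the name of a
# running container that has the Docker CLI installed.
compare_with_container() {
    host_id=$(docker info --format '{{.ID}}')
    inner_id=$(docker exec "$1" docker info --format '{{.ID}}')
    same_daemon "$host_id" "$inner_id"
}
```

Calling compare_with_container <container-name> on the host, for a container started with docker.sock mounted, would be expected to print "same daemon".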

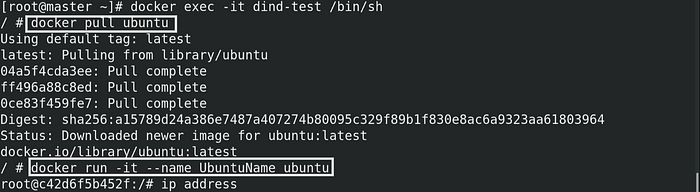

I have pulled the Ubuntu image and launched a container:

>> docker pull ubuntu

>> docker run -it --name

But whatever we do in this container is also accessible from the host.

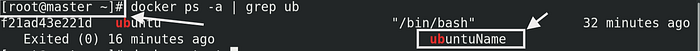

Here we can see that the ubuntuName container, launched from our Docker in Docker container, is visible from the local system.

We can even use this container from the Docker container.

This looks like Docker-in-Docker, feels like Docker-in-Docker, but it’s not Docker-in-Docker: when this container creates more containers, those containers are created in the top-level Docker. You will not experience nesting side effects, and the build cache will be shared across multiple invocations.

⚠️ Former versions of this post advised to bind-mount the docker binary from the host to the container. This is not reliable anymore, because the Docker Engine is no longer distributed as (almost) static libraries.

Method-2:

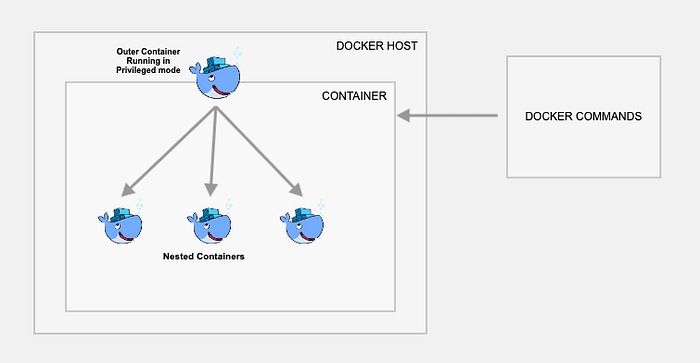

Docker in Docker Using dind

The drawback of the above approach is that the containers we create are visible to, and accessible from, the host. The dind method is a solution: it actually creates a child container inside a container. If you really want to, you can use “real” Docker in Docker, that is, nested Docker instances that are completely encapsulated from each other. You can do this with the dind (Docker in Docker) tag of the docker image, as follows:

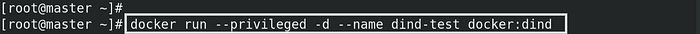

Launch a new container using the docker image with the dind tag:

>> docker run --privileged -d --name <container-name> docker:dind

- --privileged: Privileged containers in Docker are, concisely put, containers that have all of the root capabilities of a host machine, allowing the ability to access resources that are not accessible in ordinary containers.

- -d: Runs the dind container detached. Avoid attaching with -it for dind. If you forgot to detach, exit from that container, start it again, and then use docker exec -it <container-name> /bin/sh; this will run successfully.

- docker:dind: docker is the image and dind is the tag
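The inner daemon may need a few seconds after the container starts before it accepts commands, so a script may want to wait for it. The sketch below combines the flags explained above with a simple retry loop. retry and start_dind are hypothetical helper names, and the 30 attempts with a 1 second pause are arbitrary values chosen for the example.

```shell
#!/bin/sh
# retry (hypothetical helper): run a command up to $1 times, sleeping $2
# seconds between attempts; succeeds as soon as the command succeeds.
retry() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while :; do
        if "$@"; then return 0; fi
        if [ "$i" -ge "$attempts" ]; then return 1; fi
        i=$((i + 1))
        sleep "$delay"
    done
}

# start_dind (hypothetical helper): start a detached, privileged dind
# container named $1 and wait until its inner daemon answers.
start_dind() {
    docker run --privileged -d --name "$1" docker:dind || return 1
    retry 30 1 docker exec "$1" docker info >/dev/null 2>&1
}
```

With these definitions loaded, start_dind <container-name> would start the container and return once docker info succeeds inside it, or fail after the retries run out.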

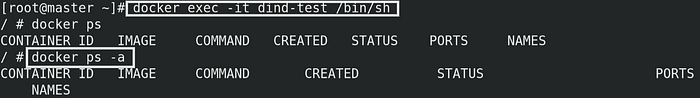

Now we can execute commands in the dind container using the command below:

>> docker exec -it <container-name> <command-to-run>

- exec: Executes a command in a running container. Here we use /bin/sh, which gives us a new shell.

From the above image, we can observe that there are no images or containers in this dind container.

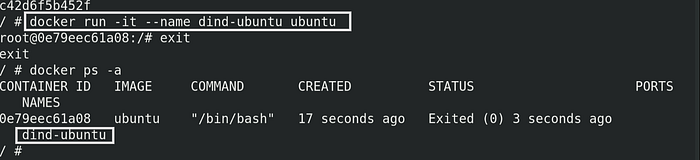

Now, inside this Docker, I am launching an Ubuntu container named dind-ubunt.

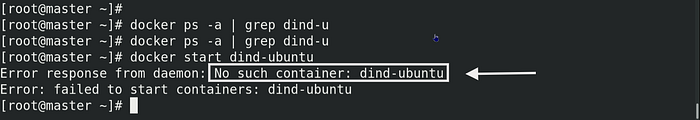

If we go to the host and try to find the nested container (that is, the container launched inside the dind container), we can’t find it or start it, because with the dind method the Docker instances are completely encapsulated from each other.
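This isolation can be checked from a script by listing container names on both daemons and looking for the nested container in each list. The following is only a sketch. name_in_list, visible_on_host and visible_in_dind are helper names invented here, and the dind container is assumed to be running.

```shell
#!/bin/sh
# name_in_list (hypothetical helper): succeeds if $1 appears as a whole
# line in the newline-separated list $2.
name_in_list() {
    printf '%s\n' "$2" | grep -Fxq -- "$1"
}

# visible_on_host (hypothetical helper): succeeds if a container named $1
# is known to the daemon that the local Docker CLI talks to.
visible_on_host() {
    name_in_list "$1" "$(docker ps -a --format '{{.Names}}')"
}

# visible_in_dind (hypothetical helper): same check, but asks the daemon
# inside the dind container named $1 about the container named $2.
visible_in_dind() {
    name_in_list "$2" "$(docker exec "$1" docker ps -a --format '{{.Names}}')"
}
```

For the example above, visible_in_dind <container-name> dind-ubunt would be expected to succeed, while visible_on_host dind-ubunt would be expected to fail.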

Is Docker in Docker good?

Docker-in-Docker was created to help the core team work faster on Docker development. Before Docker-in-Docker, the typical development cycle was:

- build

- stop the currently running Docker daemon

- run the new Docker daemon

- test

- repeat

And if you wanted a nice, reproducible build (i.e. in a container), it was a bit more convoluted:

- hackity hack

- make sure that a workable version of Docker is running

- build new Docker with the old Docker

- stop Docker daemon

- run the new Docker daemon

- test

- stop the new Docker daemon

- repeat

With the advent of Docker-in-Docker, this was simplified to:

- build+run in one step

- repeat

Much better, right?

Is running Docker in Docker secure?

Some Docker experts say that “Docker-in-Docker is not 100% made of sparkles, ponies, and unicorns.”

What I mean here is that there are a few issues to be aware of.

One is about LSM (Linux Security Modules) like AppArmor and SELinux: when starting a container, the “inner Docker” might try to apply security profiles that will conflict or confuse the “outer Docker.” This was actually the hardest problem to solve when trying to merge the original implementation of the --privileged flag.

For example, one developer explains the issue they faced: “The changes would work (and all tests would pass) on a Debian machine and Ubuntu test VMs, but it would crash and burn on Michael Crosby’s machine (which was Fedora if I remember well). I can’t remember the exact cause of the issue, but it might have been because Mike is a wise person who runs with SELINUX=enforce (I was using AppArmor) and my changes didn’t take SELinux profiles into account.”

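Running Docker in Docker with either the docker.sock or the dind method is less secure than running ordinary containers: a container with access to docker.sock has complete control over the host's Docker daemon, and a dind container has to run in privileged mode.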

Guys, that brings us to the end of this blog. I hope you all liked it and found it informative. If you have any queries, feel free to reach out to me.